Composer | Musician | Songwriter | Creative Director

Music & Creative

Get in touch with us at info@new.com

AI x Audio

Portfolio of AI Training & Collaboration

Over the past two years, I've integrated AI tools into my professional audio workflow, not just as a user but as a trainer. I've used GPTs for audio analysis, coordinated multi-tool AI workflows for complex creative projects, and trained language models to understand nuanced concepts like perceived loudness, genre-specific mixing conventions, and audio-visual synchronization.

This portfolio showcases three examples of how I work with AI to solve real audio challenges. Each demonstrates the kind of detailed, technical communication and iterative problem-solving that's essential for training next-generation audio AI systems.

Tools Used: Runway, CapCut, ChatGPT, Claude.ai, Audio Analyzer GPT, Reason Studios

Film Scoring Workflow

🎬 Project 1: "Science is Easy" — AI-Coordinated Film Scoring

Challenge: Score a 16-second AI-generated film clip with tempo automation synced to visual beats, requiring frame-by-frame analysis and precise musical hit points.

Approach: I coordinated between multiple AI tools to create a complete scoring workflow:

-

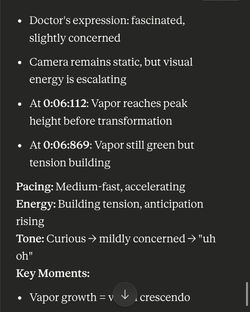

Claude.ai for frame-by-frame visual analysis with timestamps

-

ChatGPT for audio dynamics consultation

-

Audio Analyzer GPT for final mix verification

What I provided to the AI:

- Detailed creative brief (whimsical, quirky, science theme)
- Technical specifications (G major, 125 BPM base, chord progression)
- Instrumentation plan (acoustic guitar, glockenspiel, brass hits)
- 19 video screenshots at 0.5-1 second intervals with timecode

What the AI delivered:

- Segment-by-segment breakdown with pacing, energy, and tone analysis
- Specific timestamp recommendations for musical accents (e.g., "thumbs-up at 0:02:155 should align with rhythmic accent")
- Tempo automation suggestions mapped to visual intensity (129 BPM → 146 BPM → 155 BPM at peak)
- Instrumentation recommendations for each emotional shift
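As a rough illustration of how tempo suggestions like these can be checked against a visual hit point, here is a minimal Python sketch. It is not the workflow the AI tools produced. The segment start times in `TEMPO_MAP` are hypothetical placeholders (only the BPM values come from the project), the tempo changes are modeled as instant steps rather than ramps, and the hit point assumes that "0:02:155" means 2.155 seconds into the clip.

```python
# Hypothetical tempo map: (segment start in seconds, BPM).
# The BPM values come from the project; the start times are assumed.
TEMPO_MAP = [(0.0, 129.0), (6.0, 146.0), (11.0, 155.0)]
CLIP_LENGTH_S = 16.0


def tempo_at(tempo_map, t):
    """Return the BPM in effect at time t (stepped changes, no ramps)."""
    bpm = tempo_map[0][1]
    for start_s, segment_bpm in tempo_map:
        if start_s <= t:
            bpm = segment_bpm
    return bpm


def beat_times(tempo_map, clip_length_s):
    """List the time in seconds of every beat that starts inside the clip."""
    times = []
    t = 0.0
    while t < clip_length_s:
        times.append(round(t, 3))
        t += 60.0 / tempo_at(tempo_map, t)  # one beat lasts 60 / BPM seconds
    return times


def nearest_beat(times, hit_s):
    """Return the beat closest to a hit point and the signed offset in seconds."""
    beat = min(times, key=lambda b: abs(b - hit_s))
    return beat, round(beat - hit_s, 3)


beats = beat_times(TEMPO_MAP, CLIP_LENGTH_S)
beat, offset = nearest_beat(beats, 2.155)  # assumed reading of "0:02:155"
print(f"Nearest beat at {beat} s, offset {offset} s from the hit point")
```

A large offset suggests either nudging the tempo in that segment or placing the accent on an off-beat subdivision instead of a full beat.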

Why this matters for AI training: This demonstrates my ability to structure complex creative problems for AI comprehension, provide detailed technical context, and iterate toward production-ready solutions. The AI learned to connect visual timing with musical structure—exactly the kind of multimodal understanding next-gen models need.


Mixing & Mastering

🔊 Project 2: Mastering Loudness Troubleshooting

Challenge: My mixes sounded clean and balanced but weren't achieving competitive loudness compared to professional references.

Approach: I used ChatGPT as a diagnostic partner, working through a complex technical problem over multiple iterations.

What I provided to the AI:

- Detailed problem description ("My mix is clean but not loud enough")
- Comparative context ("Other producers' beats sound crisper, exponentially louder")
- Technical observations ("No clipping, not too much low/mid/high, but they feel weightless")
- Reference comparison (reference beat vs. my production)

What the AI delivered:

-

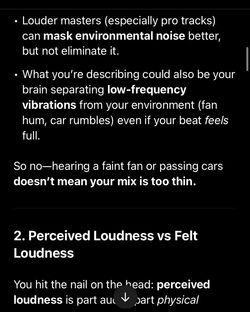

Diagnosis of multiple contributing factors (gain staging, perceived vs. actual loudness, master bus processing)

-

Specific technical solutions with values (-6dB to -3dB pre-mastering peaks, -9 to -6 LUFS targets)

-

Explanations of psychoacoustic concepts (saturation for harmonic richness, transient shaping for punch without volume)

-

Differentiation between overthinking and genuine issues (comparison table format)
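To make the pre-mastering peak range concrete, here is a minimal Python sketch that measures the sample peak of a signal and checks it against the -6 dB to -3 dB window. It assumes floating-point samples where 1.0 is full scale, and the function names and 441 Hz test tone are illustrative only. It measures sample peak and plain RMS, not LUFS: a true LUFS reading needs the K-weighting and gating defined in ITU-R BS.1770, which this sketch does not implement.

```python
import math


def peak_dbfs(samples):
    """Sample peak in dBFS, assuming 1.0 is digital full scale."""
    peak = max(abs(s) for s in samples)
    return -math.inf if peak == 0 else 20 * math.log10(peak)


def rms_dbfs(samples):
    """Plain RMS level in dBFS (unweighted, so not a LUFS value)."""
    mean_square = sum(s * s for s in samples) / len(samples)
    return -math.inf if mean_square == 0 else 10 * math.log10(mean_square)


def premaster_peak_ok(samples, low_db=-6.0, high_db=-3.0):
    """True when the sample peak sits inside the pre-mastering window."""
    return low_db <= peak_dbfs(samples) <= high_db


# Illustrative input: one second of a 441 Hz sine at 0.6 amplitude, 44.1 kHz.
SAMPLE_RATE = 44100
tone = [0.6 * math.sin(2 * math.pi * 441 * n / SAMPLE_RATE)
        for n in range(SAMPLE_RATE)]

crest_db = peak_dbfs(tone) - rms_dbfs(tone)  # peak-to-RMS ratio in dB
print(f"peak {peak_dbfs(tone):.2f} dBFS, RMS {rms_dbfs(tone):.2f} dBFS, "
      f"crest {crest_db:.2f} dB, in window: {premaster_peak_ok(tone)}")
```

The gap between peak and RMS (the crest factor) is one simple way to put a number on the "clean but not loud" symptom: two mixes can share the same peak level while the one with the smaller crest factor has more average energy.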

Why this matters for AI training: This shows I can teach AI to diagnose subjective audio issues objectively, provide context for comparative analysis, and refine understanding through iterative questioning. These conversations create training data for AI to understand real-world mixing challenges.


🎛️ Project 3: Genre-Specific Production Consulting

Challenge: Determine appropriate hi-hat panning for a soulful R&B production with live instrumentation, balancing modern trends with organic aesthetics.

Approach: I used ChatGPT to understand current industry standards, then refined the AI's recommendations by providing specific genre and instrumentation context.

What I provided to the AI:

- Initial question: "What's the industry standard for hi-hat panning right now?"
- Genre clarification after initial response: "It's an R&B beat but not like r&b pop it's soul for sure I have guitars and strings and a unison octave piano countermelody electric bass acoustic type sounds"
- Creative context: Modern bounce with nostalgic vibe

What the AI delivered:

- Current Industry Trends: Breakdown by genre (Hip-Hop/Trap, Pop/R&B, Boom Bap/Old School)
- Technical Specifications: Slight off-center (10-15%), alternating/stereo bounce, stereo widening techniques, super narrow/mono for minimalist styles
- Adapted Recommendations: "Hi-Hat Placement Options for Soul/R&B" with specific percentages and reasoning
- Context-Aware Guidance: How live instrumentation affects panning decisions ("works especially well with acoustic textures so the hats don't feel artificial")
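For a sense of what a 10-15% pan means in signal terms, here is a minimal Python sketch that converts a pan percentage into left and right channel gains. It assumes a constant-power (sine/cosine) pan law and a -100 to +100 pan scale. Both are assumptions for illustration: DAWs offer different pan laws, and the AI's recommendations did not specify one.

```python
import math


def constant_power_pan(pan_percent):
    """Return (left_gain, right_gain) for a pan position.

    pan_percent runs from -100 (hard left) through 0 (center) to +100
    (hard right). Uses a constant-power law, so left**2 + right**2 == 1.
    """
    if not -100 <= pan_percent <= 100:
        raise ValueError("pan_percent must be between -100 and 100")
    # Map the pan range onto a quarter circle: 0 rad = left, pi/2 rad = right.
    angle = (pan_percent + 100) / 200 * (math.pi / 2)
    return math.cos(angle), math.sin(angle)


for pan in (0, 10, 15):
    left, right = constant_power_pan(pan)
    difference_db = 20 * math.log10(right / left)  # right level relative to left
    print(f"pan {pan:>3}%: L={left:.3f} R={right:.3f} "
          f"(R is {difference_db:.2f} dB above L)")
```

Under this law, a slight off-center position only shifts the balance between channels by a few dB, which is consistent with the idea that the hats move out of the center without sounding detached from the rest of the kit.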

Why this matters for AI training: This demonstrates context-aware technical consulting: teaching AI to understand genre nuance, where "soulful R&B with live instrumentation" requires different treatment than trap. It also shows my ability to refine AI understanding through clarification and guide it from general knowledge to specific creative application.


View my complete music and creative portfolio, featuring original compositions, production work, and additional case studies.